Can We Speed Up Browser Evolution?

So I just read the statement from the Mozilla Foundation which predicts 10% of the world’s web browsers will be Mozilla-based by the end of 2005. While some people seem pretty excited about this development, I can’t help but wonder if we are settling for too little here. 15 months? 10%? By comparison, every time a new version of the Flash plug-in is released, we get a predictable 80-90% penetration rate at the 15 month mark. Why can’t we expect this sort of development pace with browsers? Several reasons… some perhaps solvable and some perhaps not. This article will discuss several of the issues involved and recommend possible solutions.

Leadership and control

One of the biggest factors in the upgrade cycle differences between Flash and open source browsers like Mozilla is the lack of absolute control in open source development. When Macromedia sits down every year to plan their budgets and product releases, they need only to decide on an amount of money to invest in Flash development and a feature set. After these two decisions are made, the Flash team has their marching orders and they can begin setting things like release dates and migration strategies. Every member of the Flash team is paid a salary for their full-time dedication to the product over the next several months and since most Macromedia employees are in the same location, workplace synergies help to speed and enhance the development process.

Contrast this to the open source development process of the Mozilla Foundation. Mozilla, like all open source projects, requires the individual contributions of people who, for the most part, butter their bread elsewhere in the internet economy. While there are a handful of people working full-time on the project, a large share of the contributions are made by people who pitch in after hours, of their own volition. This is the very spirit that makes open source what it is: a distributed group effort aimed at creating public good. While that goal is arguably more honorable than the goal of, say, Macromedia, its built-in disadvantages are obvious. As far as I know, there is no single person in the Mozilla Foundation who can push through a feature set without the approval of many others. This is, of course, by design, but again, it carries its own disadvantages. There is also no open-source equivalent to a Macromedia manager awarding bonuses or extra stock grants to employees working overtime for a product launch. Since Mozilla participation is voluntary and only intrinsically rewarding, there is little room for injecting extra motivation from the outside.

So you might be saying, “But you’re comparing Flash to a browser… that’s not fair”. Okay, well let’s look at Safari then. Safari took about a year and a half to become arguably the best browser in the world, on any platform. Why? Leadership and control. Dave Hyatt and the small team he worked with at Apple were able to take the little-used open-source KHTML rendering engine and set real objectives for it. Using the famous Steve Jobs mantra of “real artists ship”, Hyatt and his team not only improved the rendering engine itself by leaps and bounds but they built an entire framework for web browsing on OS X called WebKit. And on top of that, Apple’s improvements to KHTML were donated right back to the open-source community for use in other KHTML projects. Why did Apple do this? Because they realized that their strength on the web relies on interoperable, open standards. This is the opposite of Microsoft’s early stance that IE must contain technologies only available to it and not other browsers.

* I want to pause right here and make it clear that nothing in this article is meant as a knock against Mozilla, open source, or anybody involved in either. Firefox — now that it’s on the right track — is clearly the most important product in the browser world today and this article aims only to examine how to help speed its progress and adoption.

Transparency of operation

With each new version of any given browser, observable changes are made to the interface. New menu options are added. A new icon is sometimes designed and placed in a different place on the user’s computer than the old icon. Chrome looks different. Bookmarks are sometimes not carried over correctly. Toolbars and other customizations may be removed. The list goes on and on. Generally speaking, users hate change, and when change is introduced during an upgrade cycle, the first reaction in users is almost always negative. Sure, this feeling may subside once the user begins to appreciate the improvements, but it still creates “upgrade anxiety” as users are afraid of what changes any new browser will bring.

Compare this to a Flash upgrade. Flash is completely transparent to the user. There is no interface. In fact, this is what initially attracted me to Flash 2 back in the mid 90s. When a user upgrades Flash plug-ins, the only noticeable difference is that more robust applications are now available to them. And even then, applications which take advantage of the newest capabilities of Flash only begin trickling in over the next year or so. In other words, there is no shock, and hence there is no anxiety.

Ease of upgrade

Simply put, Flash has set the gold standard for software upgrades via the web. By marching to their own standard and using both the object and embed tags in a smart fashion, Macromedia makes new versions of Flash instantly and transparently available for PC IE users and pretty easily available to the rest of the world as well. A restart is usually not required, no user data is affected, and the upgrade weighs in at anywhere from a few hundred kilobytes to about a megabyte. Over a broadband connection, the upgrade is usually complete from start to finish in a matter of seconds. Over a dialup connection, it may take a few minutes. But the most important aspect of the upgrade is that there is almost no possibility of confusion for the user. It feels more like getting a package in the mail than moving to a new city.

Browsers, on the other hand, are notorious for their difficult upgrade procedures. Now, when I say “difficult”, I don’t mean difficult to you and me. Any power user of web technologies and computer systems can figure out how to upgrade rather easily… and that is part of the reason we are always the first to upgrade. Take your average computer user, however. This is the person who thinks that little blue “e” icon on their desktop labeled “The Internet” actually is the internet. How many times have you heard the phrase “my internet won’t launch” or even, from President Bush in the debates, “I hear there’s rumors on the Internets…”? The fact is that part of the reason Internet Explorer has a 95% market share right now is that Microsoft has successfully reduced the concept of the internet down to a postage-stamp-sized icon on your desktop.

You want to get people using Firefox? You need to do a lot more than just coerce them into clicking a “Download Now” link. You need to educate them on what a browser is and why it’s not a “different internet” but rather a different agent between them and the internet. It’s a tricky proposition because this revelation definitely adds a layer of complexity to a user’s perception of the internet, but at the same time, it’s an important layer to know about. It is an assistive layer, a navigational layer, and most importantly a protective layer. And so, the best way to introduce this layer is to succinctly spell out its advantages, as has been done very nicely on the Browse Happy site.

Isolation of the rendering engine

I separate a browser upgrade into two parts: the interface and the rendering engine. The interface is everything outside the viewport and the rendering engine is everything inside of it. For the most part, the interface is completely localized and does not materially affect the display of web content. It lets you do things like print a page, add a bookmark, or toggle between windows. The rendering engine, however, radically affects the display of web content and is codependent on things like HTML, CSS, and JavaScript in order to do its job. It is this sometimes-symbiotic, sometimes-antibiotic relationship between the rendering engine and the code we develop for it which causes the majority of headaches in web developers’ lives and sets web publishing back by years at a time.

As much as I like tabbed browsing, more sophisticated autofill, and better bookmark organization, these are clearly user interface features within the browser itself and I’m happy letting users decide if and when to apply the upgrades which introduce them. But as for the rendering engine, I feel like this is the sort of thing that should be applied automatically every 1-2 years or at least pushed in front of the user in a persuasive yet polite way… a la Flash.

“We’ve noticed your browser needs to be quickly tuned. The necessary components have already been downloaded. Click here to tune-up.”

What got me thinking about this was one day several months ago when I launched my MSN Messenger client and was greeted with the message “Due to security upgrades, you must upgrade your version of MSN Messenger to 4.8 in order to log into the MSN Instant Messenger network”. Wow! As long as this doesn’t happen too often (it’s happened only once to me), it’s a spectacular way to get everyone on the same page. I bet Microsoft was able to upgrade their entire population of MSN IM clients within a month or two since the upgrade was more or less mandatory. Why do we settle for any less in browsers? If we could limit automatic updates to the rendering engine alone, users could scarcely argue that an upgrade was any sort of risk.
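To make the idea a little more concrete, here is a minimal sketch in Python of the kind of check a browser could run against its rendering engine version at launch. Everything in it is hypothetical: the function names, the version strings, and the notion of a published minimum engine version are assumptions for illustration, not a description of how any real browser or MSN Messenger works.

```python
from typing import Optional, Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn a dotted version string such as "4.8" into (4, 8)."""
    return tuple(int(part) for part in text.split("."))


def engine_upgrade_required(installed: str, minimum: str) -> bool:
    """Return True when the installed engine is older than the minimum."""
    a, b = parse_version(installed), parse_version(minimum)
    # Pad the shorter version with zeros so "4" and "4.0" compare as equal.
    width = max(len(a), len(b))
    a += (0,) * (width - len(a))
    b += (0,) * (width - len(b))
    return a < b


def upgrade_prompt(installed: str, minimum: str) -> Optional[str]:
    """Return the polite nudge for out-of-date engines, or None if current."""
    if engine_upgrade_required(installed, minimum):
        return ("We've noticed your browser needs to be quickly tuned. "
                "The necessary components have already been downloaded. "
                "Click here to tune-up.")
    return None


# Hypothetical versions: the interface is untouched either way,
# only the engine version decides whether the user is nudged.
print(upgrade_prompt("4.7", "4.8"))
print(upgrade_prompt("4.10", "4.8"))
```

Comparing versions as tuples of integers rather than as raw strings matters here: as plain text, "4.10" would sort before "4.8" and the user would be nagged about an engine that is actually newer.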

Furthermore, if this sort of reliable upgrade cycle had started in the 90s, where would we be right now? CSS-7? We’re already at Flash 7. Look at all the progress that has been made between Flash 2 and Flash 7 and apply even 50% of that progress to the browser space. Would it cause more frequent redesign cycles for web sites? Sure. But all that does is create jobs in the industry and it is certainly a legitimate investment in technology. Instead, companies are spending their money producing commercials with sock puppets. Additionally, when HTML and CSS specs are written, they are usually written in a way that does not break existing sites. Therefore, a company may still wait however many extra years it wants before redesigning… it will just be missing out on possible extra site features.

Setting the standards

The specter of automatic, benevolent rendering engine upgrades re-introduces the question of control. If Safari, Mozilla, Opera, and IE are to release upgrades around the same time which introduce roughly the same rendering engine enhancements, clearly one of two things needs to happen:

- The W3C needs to draft a Kyoto Treaty of sorts and convince all major players (currently 4) to apply W3C recommendations to their rendering engines on perhaps a biennial basis. The W3C would draft the specs, the browser makers would have a month or two to discuss it and offer revisions, and then the final spec would be delivered. The spec would never be considered a finished product and thus it would never be committeed to death in the pursuit of perfection. How is this different from what happens today? Easy. There is currently no agreement and there are no hard timelines. We are dealing in recommendations which may or may not manifest themselves at some point in the future and that is why we see such slow progress. If someone asked you right now when you’d expect multi-column text flow to be supported in 90% of browsers, what would you say? I couldn’t even make a guess. If someone asked me when they could expect alpha-maskable Flash video to be viewable by 90% of the population, I could almost tell them the exact month.

- Representatives from the four major players in the browser world could just get together and decide on this stuff themselves. I’m not talking about a room full of people. I’m talking about a tent full of people. Camp David style. If they were smart, they’d expand the group with a Doug Bowman, a Shaun Inman, or other persons of noble repute, but the group would have to stay intimately small to be effective. Additionally, there would be little tolerance for idealism if it impeded speed. XML purists need not apply. The WHATWG is the closest thing I see to this right now. They are a small group self-charged with readying specs for next-generation web applications, and in many cases, they are the exact people I’d want on this board — people with the power to walk into their companies and order immediate product changes.

Some people will look at these two options and wonder if all parties would actually agree to such things. Well, maybe not, but I’m pretty sure 3 out of 4 would agree to some degree of it, and that’s enough to eventually push the 4th into irrelevance or acquiescence. If the recent success of Safari and Firefox has shown us anything, it’s that “open” wins in the long run, and if you aren’t producing what the community wants, the community will eventually not want you.

Backwards compatibility

The bane of the web developer’s existence, in many ways, is backwards-compatibility. It is important, however, to separate the concepts of backwards-compatibility in browsers and backwards-compatibility in code. If Mozilla, Apple, Opera, or Microsoft were to release a new browser, it would clearly have to be backwards-compatible with existing web sites which use older coding standards or just very poor coding practices. Without this backwards-compatibility, no one would adopt a new browser. Both Mozilla and Opera have struggled with this issue over the past few years, with plenty of sites (well built or not) not displaying properly in them. But now that smart error-tolerances have been built in, Mozilla and Opera are a joy to use. Standards purists will say that new browser versions shouldn’t have to deal with error tolerances and that web developers should just always build error-free sites, and they are right… but they are also ignoring the reality that most web sites will not be 100% standards-compliant for quite some time, if ever. Validation aside, browsers need other error-correction as well, related to various CSS quirks and how they affect the display of content on the page.

So, backwards-compatibility in browsers is a must, and it will likely always be. But what about backwards-compatibility in code? This is going to make some people mad, but I have to admit that I am not for 100% backwards compatibility in code, in perpetuity. Let’s say I have an audience of 100 people who visit my site. Let’s say if I code and design with method A, it will produce good pages viewable by all 100 people. Now let’s say that if I code and design with method B, it will produce GREAT pages viewable by 99 of the people. The one person who can’t view my pages is maybe clinging to their copy of Netscape 4. How many people would choose method A? I wouldn’t. I’d choose method B, because the aggregate amount of quality I’m producing for my audience is worth losing one audience member over. Now, my example is assuming a 99% success rate. What is the lowest you’d go? Clearly, different people have different thresholds. I think most people would fall squarely in the mid to upper 90s. 97%, 98%, 99%… around that area. So if you’re going to forsake 100% backwards compatibility in code, as I do, the whole goal of increasing the pace of web standards improvement becomes a lot more attainable. After all, as with Flash, it may only take months to reach the 90% penetration rate, but it may take a lifetime to reach 100%.

* In making a decision to drop support for certain user agents, it is obviously important to do your homework first. Different sites call for different practices. For example, an e-commerce site may lose a lot of money if even one customer cannot access the site… whereas an ad-supported site does not share this concern. This is part of the reason why the eBays and Amazons of the world still code the way they do.

In digesting these views on backwards-compatibility, please keep in mind that I am not advocating throwing user accessibility aside in any shape or fashion — only user agent accessibility. What does that mean? If a person cannot see a web page because their vision is impaired, I have sympathy for them. I want to design and code in such a way that helps them. But if a user cannot see a page because they are too unmotivated to stop using Netscape 4, I really don’t have any sympathy for them. A bit of pity, maybe, but definitely not sympathy. In fact, I feel like these people would be better off in the long run if every web site in the world turned them away at the door (as is starting to happen). Now, some people will just say “Build your site in such a way that it degrades gracefully in these sorts of browsers”. To that, I say fine, if your site is heavily text-oriented and stylistically sparse. But if you want to do things on your site which require some of the newer W3C standards and other interactive touches, the reality is that your site just may not degrade very well. Other people will say “Well just serve an unstyled page to these users”. Again, I say fine, but does anyone really enjoy sites with completely unstyled content? If you’re a layout-intense media site, your “unstyled” site is going to look and feel a lot worse than Yahoo’s intentionally sparse site, so the 1% of users who may see your unstyled page are probably better off going to Yahoo.

My point here is that I feel like for most sites, using modern coding standards with perhaps a tad less backwards-compatibility is okay to do. It’s your site. Why let such a tiny percentage of people dictate how you design and code it?

By the way, as a further footnote to this point, I am also not saying it’s ok to say things like “Okay, my audience is 95% PC IE so I’ll just code for that.” That is NOT the message. The message is, “Let’s speed the adoption rate of W3C standards and let’s also speed the rate at which we apply them on our own sites.” If that causes a few more people to upgrade browsers before they’d like to, so be it.

In most cases, you are providing your site to the world completely of your own volition. As much as people would like to believe otherwise, it is not the right of every citizen to be able to view it. If you wanted to, you could write your whole site in gibberish or you could password-protect the entire thing. It doesn’t matter. It’s your right to do so. The notable exception here is government agencies, who are required to provide certain information to all citizens within their region. The other notable exception, of course, is in matters of accessibility. Just as one should not discriminate on the basis of race, creed, color, or sex, one should not discriminate on the basis of disability. So before anyone comments on that, let’s be clear that when I suggest perhaps ditching some backwards-compatibility, accessibility is not part of what should get ditched. In fact, by adopting new accessibility standards, accessibility should actually be improved. Should we really be using all of these counter-intuitive image replacement techniques? With better standards and faster adoption of said standards, we can ditch the need for these tricks.

Next steps

So what can we do to help radically speed the adoption of better browsers and gradually raise the backwards-compatibility bar? Here are a few suggestions:

- Help spread Firefox to every computer you can get your hands on. I’m talking about your parents’ computers, your coworkers’ computers, computers in public spaces, etc. Don’t just download it. Install it, import everything you can, and place its icon where the old browser’s icon was. Obviously don’t remove any old browsers, but make it as easy as possible for users of said computer to subtly modify their browsing routine so that it goes through Firefox. In cases where education is necessary, take a few minutes to explain why switching is a good thing. Use hyperbole when necessary.

- Design and code your sites specifically with modern web standards in mind. Most of your testing, up through completion of the layout, should be done in standards-compliant browsers like Safari and Firefox, and then you can add whatever hacks you need after that to massage the layout into other browsers. I find these days that the only hack I generally even need is the underscore hack, which is extremely easy to implement.

- If you are faced with a choice between forward-looking code and backward-looking code, choose forward-looking in all cases except where a significant portion of your audience may be alienated. A quick example of this is the Netscape 4 example. If 1% of your audience uses Netscape 4 and designing a Netscape 4-friendly layout creates 30% more work and 30% more code and isn’t as pure as you’d like it, consider ditching Netscape 4 compatibility. Go ahead and serve them an unstyled page if you’d like, but don’t get hung up on how it looks.

- Continue to push the limits of the web and exert pressure on browser makers to continuously improve their products. I am so happy with how far Mozilla and Safari have come in the last couple of years and I’d love to see the momentum continue. Rendering current sites beautifully is a great start. Rendering future sites even more beautifully is the overriding goal. Give us drop-shadows for arbitrary objects. Give us multi-column text flow capabilities. Give us the ability to clear absolutely positioned divs. Without designers and developers clamoring for these improvements, we’ll enter another period of stagnation. Don’t settle for simple text-based bloggish layouts for everything you do. I, along with Shaun Inman, Tomas Jogin, and Mark Wubben, invented sIFR to give people richer, more beautiful typography on the web; but another reason we invented it was to show precisely how silly it is that it’s even necessary. Now that there are sites out there which make beautiful use of it, browser makers and standards bodies have at least a working model of what people are looking for.

- Create the Rapid Browser Improvement Delta Force (or R.B.I.D.F.) I mentioned earlier in the article. I’m serious when I say that I’d rather have a handful of representatives determine actionable browser improvements and then immediately act on them than wait for initiatives to work their way through years of committees only to result in hopeful recommendations. Please know that this is not a knock on the incredible amount of thought and effort coming from these committees… it is just a realization that sometimes the more people who are involved in a decision and the more perfect these people try to make that decision, the slower things tend to move. Sometimes you don’t need perfect decisions… you just need helpful, swift ones. If anyone has suggestions for such a panel of people, please post them in the comments. Ten or fewer people sounds about right to me.

Conclusion

I realize that some of my thoughts on browser development are a bit naive (seeing as I have never developed one myself), but I’ve been in this industry long enough to know that our inability to use newer coding standards shortly after they are released is largely self-imposed. We settle for browsers which don’t upgrade their rendering engines transparently, we settle for specifications which take too long to pass through large committees, and we settle for coddling the 1% of the population who don’t see fit to upgrade with the rest of us.

We need to quit settling.

Do your part by helping spread standards-compliant browsers like Firefox, Safari, and (sometimes) Opera to as many computers as possible. With a little bit of help, I think we can obliterate this ultra-conservative 10% prediction by the end of 2005. Don’t stop with browser proselytizing though. Gather usage statistics on your site and plan migration strategies for a move to more modern code. If you reckon you’ll be able to dump support for Browser X in 12 months, begin laying the groundwork right now. And finally, keep pushing the boundaries of web design and development so that the people up in the hills who write stuff into stone know that we’re not going to wait for what’s right when we can have what’s right now. We need to get web publishing back on the fast track, and by rapidly speeding both the evolution and the adoption of modern web standards, we can create an efficiency and innovation boom this medium has never seen.
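As a rough sketch of what gathering usage statistics and planning a migration might look like, the Python below tallies browser share from a list of already-classified visits and checks whether dropping one browser still leaves you above a reach threshold. The function names, the 97% default threshold, and the sample numbers are all made up for illustration; a real site would pull its visits from its own logs and pick its own threshold.

```python
from collections import Counter
from typing import Dict, Iterable


def browser_share(visits: Iterable[str]) -> Dict[str, float]:
    """Return each browser's share of visits as a fraction between 0 and 1."""
    counts = Counter(visits)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {name: count / total for name, count in counts.items()}


def can_drop(shares: Dict[str, float], browser: str,
             min_reach: float = 0.97) -> bool:
    """True if the audience left after dropping `browser` meets min_reach."""
    return 1.0 - shares.get(browser, 0.0) >= min_reach


# Made-up sample: 100 visits, exactly one of them from Netscape 4.
sample = ["IE 6"] * 80 + ["Firefox"] * 12 + ["Safari"] * 7 + ["Netscape 4"]
shares = browser_share(sample)

print(can_drop(shares, "Netscape 4"))  # 99% reach remains
print(can_drop(shares, "Firefox"))     # only 88% reach remains
```

Running the same tally every month gives you a trend line, which is what tells you whether dumping support for Browser X in 12 months is realistic.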

Just curious: What do you think is a reasonable percentage to shoot for at the end of the 15-month period?

This is one of the smartest solutions I’ve come across on how to speed the evolution of browsers. Makers of alternative browsers spend too much time pushing people to upgrade their browser because of the feature set (e.g., tabbed browsing). The average web user gets so comfortable with the software they use (especially software as ubiquitous as their web browser) that it is going to take a lot to get them to change. But if they could keep their interface and features while the rendering engine is changed, almost transparently, web development would be pushed to a whole new level.

I’m interested to see what reasons browser developers might have that would prevent them from doing this.

Dan: I suspect the 10% number is a very conservative estimate, so that if by the end of 2005, they get even 11%, they can say they met their goal… but internally, I’d expect expectations to be quite a bit higher. Maybe in the 20-25% range.

What do I think we should shoot for though? How about maybe 2/3rds of how quickly Flash moves. That would be about 60% in 15 months.

The real challenge is convincing the people who see the blue “e” on their desktops that there is reason to use another browser.

Quite frankly, my mother could care less about Firefox’s superior rendering engine and standards support. She could care less about tabbed browsing. Even for many IE users, pop-up blocking is common. So that is a tough selling point.

So how do I sell it to the person who sees the internet the way we don’t? We see it as tags and selectors. My mom sees it the same way she sees the TV, only instead of a remote control, she has a mouse and clicks on the screen.

I don’t have an answer to that.

There have been a few cases for me, where I have convinced people on the merits of Firefox’s superior security over IE. But even that doesn’t always work.

Jason, although IE’s security flaws are a good reason to make the switch, a more convincing reason is Firefox’s speed. Everyone that uses the internet wants more speed. I don’t know if there’s an actual stat for it, but it’s been my experience that Firefox is just that much faster.

Macromedia is geared toward us geek developers, who they know are going to buy their stuff. That’s why their stuff increases so fast. Plus, the plugin pop-up has probably annoyed more folks than it should have, so the average user probably got tired of seeing it and just decided to install Flash… that was before people really knew what spyware was, etc.

If folks saw a Flash plugin pop-up screen for the first time today (meaning if Flash just came out today), they’d probably be like “Ah, spyware, spyware, don’t download it!”

Now that everyone can trust Flash, and considering the average person has heard of it at least once or twice in their lifetime, they have no qualms about performing the upgrade… plus it takes like 2 seconds…

Browsers, on the other hand, take a while for the rest of the world to upgrade because it’s dependent upon the everyday common idiot to do it. It usually takes a computer-savvy nephew to come down to ol’ Uncle Jim’s basement and upgrade his browser choice. Even then, they’ll be like “What’s this program doing on my computer… where’s my internet?”

It does, doesn’t it.

Excellent point! Despite the great features that come along with FF, and the fact that I have about 10 different extensions along with it, I will be (very) much happier the day it supports CSS 3.0 and other greater techniques on the DOM etc…

ROFLOL! You know, at my alma mater, I had heard some tech geek went into all the computer labs of the entire school and installed FF on every single one of them. They said it took about a month or so… but it happened over the summer when the labs were less cluttered with students writing mid-term papers, etc. Overall they have about 500 computers on campus (not many for 30,000 students – eh, it’s a state school).

Anyway, Good read, great points. I’m with ya.

Two interesting quotes here:

“Okay, well let’s look at Safari then. Safari took about a year and a half to become arguably the best browser in the world, on any platform.”

Could you tell me where I can download Safari for my Windows and Linux boxes? Sure, there is Konqueror and the rendering engine in iTunes, but I always find the Apple statement about Safari being the best browser on any platform a bit laughable given it only runs on one platform! It’s a great browser and the best available for OSX, along with the Geckos and Opera.

How does Apple benchmark this? … and if it’s the best why doesn’t it have Adblock? – something to restore sanity and readability at last :D

“The real challenge is convincing the people who see the blue “e” on their desktops that there is reason to use another browser.”

— after speaking to some users of websites I manage it could be more like this:

“The real challenge is convincing the people who see the blue “e” on their desktops that there is another browser.”

sample answers, and I’m not kidding here:

Q: What browser are you using?

A1:Windows

A2:XP

A3:<insert ISP name>

A4:Outlook Express

A5:I don’t know

Grassroots education is what is needed here to counter the ever-present F.U.D.

Cheers

James

That is a really good point about the separate rendering engine upgrade. Not being able to do this just plain hurts the internet.

Your average user believes the application is the interface and only understands a browser upgrade in terms of interface improvements. It’s not until you start to use more than one browser that you learn that web pages are interpreted, not just viewed.

I’ve always been hesitant to upgrade to each new Firefox release because it breaks my extensions and even my themes. We need to stop telling people why they need to make the effort to upgrade and just remove the need for user effort.

Dan,

I agree that Firefox is faster than IE, but I don’t know that it is noticeably faster than IE to the average user on current hardware. Maybe on older hardware it is more noticeable, and this is a good battleground.

There are a lot of users out there on older hardware who are not even running Windows XP. Anyone on Windows versions earlier than XP can’t get the latest security patches for IE. Advantage Firefox. Firefox probably runs better on older hardware than IE. Advantage Firefox again.

James:

“The best browser in the world, on any platform” is not the same as “The best browser in the world that works on any platform”. The former simply says that it provides the best browsing experience, period. The latter says that of all browsers which support all platforms, it provides the best browsing experience. These are two totally different things and the original statement certainly doesn’t imply Safari is available for Linux or Windows.

As for the statement itself, that’s me saying it at this point… not Apple. I say it because in my opinion, it’s true. It’s subjective, so no benchmarking is necessary. Safari has built in popup blocking… I don’t need AdBlock. In fact, I think the blocking of standard ad units acts against the economic best interests of consumers. If you don’t pay for your content by viewing ads, you will pay for it in other ways.

Regarding your multiple choice answers, you are absolutely right about that. That is exactly the sort of thing I see as well when asking browser-related questions to consumers. Sucks.

Mike your idea of seamless upgrades for our browsers is a fabulous idea!

I have been grappling with this idea for a while now, which is also why I have stopped trying to get new people to install FF at the moment. I’m waiting for the 1.0 launch, as I know that many people I may convince will not upgrade again until I visit them and “encourage” them.

This actually is my biggest issue with Safari, you have to upgrade your OS to upgrade the browser. This sucks, and when we hear that MS is going to do that with longhorn we all complain! This just bugs me because early versions of Safari had terrible js support and it is hard to test them on one mac.

Oh, lastly, I have found the two best things to help get non-tech IE users to go to FF… find as you type, and the dictionarysearch extension…

Mike

Sorry, I thought you were referring to this:

http://www.apple.com/safari/

(http://images.apple.com/safari/images/indextop062203_01.gif)

It’s a great browser, but it’s not the best browser on any platform if it can’t run on every platform.

I know what you are getting at regarding UI but I think this (the content at the Apple link above) is just some marketing spiel from Apple. Which of course they are entitled to say given it is their browser……

would it be horrible to say safari is not the best browser even if it did run on multiple platforms?

I believe FF is more up to date on the render engine and displaying standard compliance code than safari is…

Footnote here, but IE runs *much* better on slower hardware. Of course, many people have tons of spyware and the rest of the crap that IE lets in, so when they make the switch, they are under the false impression that Firefox is faster. But, hey, we’ll take what we can get, right?

We should find a way to get users interested in new browsers. Right now, 99% of the time I get the same questions: why is firefox different? isn’t a browser a browser? why should it matter?

Just yesterday I was thinking: what would be the coolest, most flashy thing that could happen to the Web today? No, not the latest Flash player, nor any 3D VR technology to surf the Web… No, of course not.

Nothing would have as much impact as having IE behave like one would expect… Full W3C XHTML/CSS support for one, and then some… Seems like Redmond is too caught up in Longhorn to realise this is what a lot of people have been waiting for, now!

»Nicht weil es schwer ist, wagen wir es nicht,

sondern weil wir es nicht wagen, ist es schwer.«

»It is not because things are difficult that we do not dare,

it is because we do not dare that they are difficult.«

Lucius Annaeus Seneca

Why should one be content with 10%?

Mike, you seem to be contradicting yourself (and trying very hard to paint yourself back out of your corner)

with regards to the first part: it’s not a question of who really “enjoys” sites which are completely unstyled, but that – depending on the specific user’s situation (e.g. they may only have access to an old battered Pentium I on a slow connection, running Windows 95, with Netscape 4.7 running because they can’t afford anything else) – it’s not just a matter of stubborn luddite refusal to upgrade. for these users, who may or may not be disabled as well, what’s better? at least making the pure content accessible to them, or simply shutting the door on them, as you so gleefully suggest? the whole idea of graceful degradation is such a cornerstone of the W3C specs, that i can’t really see how you can defend foregoing any care or thought for it. and no, we’re not talking about making amends to your css/xhtml so that something displays right in old browsers, but merely not just shutting users out based on their browser choice. if their visual experience is not perfect, but they can at the very least access the content, then that’s all that matters – and that falls under accessibility just as much.

moreover:

i love how you treat matters of accessibility as an issue that stands separate from backwards compatibility. in my mind, a complete non-sequitur. so you’re saying “my site is accessible, unless you’re using an older user agent in which case i’m not even going to bother sending you unstyled content, because, you know, you won’t enjoy it”. and no, it’s not just government agencies. legislation in many countries (the DDA in the UK, for instance) make accessibility mandatory for any business site. or think about the recent priceline.com / ramada.com decision in the US.

in conclusion, i feel that you started strongly, but then really cornered yourself with your argument, made sweeping generalisations about older user agents and ditching backwards compatibility, but then tried to save yourself by graciously saying “oh, but not when it comes to accessibility”. accessibility and backwards compatibility go hand in hand!

See Bug 267281 and feel free to add comments (but not just me too comments, only post if you have something to add to the discussion please). I submitted this request/bug today.

A fantastic idea Mike, and one that should be pursued!

I think one of the best ways to increase the penetration of more modern browsers would be for ISPs to start including Firefox on their install CDs. Look at AOL. My dad doesn’t even know how to use IE because he just logs onto AOL and browses from there. He is surprised when he sees me on the home computer using Firefox to go on the internet. Even so when the latest version of AOL comes in the post it gets installed – there’s no pop-up that won’t go away like with Flash, but it’s a newer version so it must be better.

A CD in the post from your ISP with a (possibly brand-themed) install of Firefox and packaging which encourages users to upgrade to a new client and touts some simple new features (faster browsing! more secure! prettier interface!) would win many converts. Users aren’t averse to new features and better ways of using computers, they’re simply clueless as to what is possible and how to get it.

Mike Davidson blogged, “Again, I say fine, but does anyone really enjoy sites with completely unstyled content?”

For starters, that is a false dilemma. Seeing the content styled or unstyled aren’t the only two options. Given the choice between seeing unstyled content or not seeing content at all, the result is a no-brainer – I want to see the content.

Deliberately blocking users because you disagree with their choice/installation of browser is just ludicrous. I’m not asking for you to work miracles for someone who decides to use Netscape 4 – as long as you give them properly structured markup – that’s enough. Common sense is a permissible approach. If you want to go out of your way to prevent certain browsers from accessing your content – that is a step too far.

Don’t support older browsers – but don’t wilfully stand in the way of their accessing your content (styled or unstyled). No one ever won a customer by arguing with them. Websites optimised to argue with visitors have no long term future.

You mention the example of Yahoo. If they want to render acceptably in Netscape 4, that is their choice. And the reason why no-one should advocate the deliberate blocking of particular browsers on nothing more than a whim.

It is clear to me that HTML, in its SGML form, has no future. It is a wasteland mainly because of the high tolerance browsers have for tag soup based markup. Browsers need to start implementing pure XML based browsers, and not accept invalid markup. Of course, the solution lies in running two rendering engines side-by-side in a “mode-switching” configuration. Perhaps pages delivered in an application/xhtml+xml mime-type could be strictly rendered as XML documents, whilst anything else is given to the cruft-supporting HTML engine. That’s where you need to draw the line.
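To make the “mode-switching” idea concrete, here is a minimal sketch in Python. It is not how any real browser is built: the function names `pick_engine` and `parse` are made up for illustration, and Python’s standard-library parsers stand in for the two rendering engines. The point is only the dispatch: documents served as application/xhtml+xml go to a strict XML parser that rejects malformed markup, while everything else goes to a forgiving tag-soup parser.

```python
import xml.etree.ElementTree as ET
from html.parser import HTMLParser


class TagCollector(HTMLParser):
    """Lenient stand-in engine: records start tags and never rejects tag soup."""

    def __init__(self):
        super().__init__()
        self.tags = []

    def handle_starttag(self, tag, attrs):
        self.tags.append(tag)


def pick_engine(content_type):
    """Hypothetical dispatcher: strict XML only for application/xhtml+xml."""
    mime = content_type.split(";")[0].strip().lower()
    return "strict-xml" if mime == "application/xhtml+xml" else "lenient-html"


def parse(document, content_type):
    """Parse with the engine chosen by MIME type; return element names in order."""
    if pick_engine(content_type) == "strict-xml":
        # Raises xml.etree.ElementTree.ParseError on any well-formedness error.
        root = ET.fromstring(document)
        return [element.tag for element in root.iter()]
    collector = TagCollector()
    collector.feed(document)
    collector.close()
    return collector.tags
```

Under this sketch, a fragment such as `<p>one<p>two` is accepted when labelled text/html but raises a parse error when labelled application/xhtml+xml, which is exactly where the line would be drawn.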

Blocking out Netscape 4 users, but still allowing non-XHTML-supporting Internet Explorer holds no particular value or purpose. If you want to break from the past mistakes, realise backwards compatibility to cruft-compatible rendering engines is where the break needs to be made.

Mike, I thought your ideas regarding rendering engine upgrades were brilliant. Now that you mention it, it seems so obvious. Take that Netscape 4 user who refuses to upgrade. Why? Not because he likes that rendering engine better (especially as many designers today no longer cater to him, as you explained), but because all he sees is the interface. To most users, that’s what the browser is.

If this Netscape 4 user gets a popup asking him to upgrade his browser, he resists because he knows the interface will change. Now, just tell him that a few updates need to be made so that the web pages work as intended, but not to worry, the browser will work, and look the same as no changes will be made to it – he just might go for that.

The problem I see is that at least some browsers were never designed in a way that makes this possible. Take Firefox. All FF is is a rendering engine. The interface is a XUL application which is rendered by that very same engine (OK, I may be oversimplifying things, but just play along for argument’s sake). Say some part of the interface relies on a certain rendering bug which is later fixed (I have no idea if such a situation exists). When the rendering engine alone is updated, that part of the interface would be broken. Therefore an update to the interface would be required as well. If the user happens to be using a non-standard theme, that complicates matters even more, as the author of that theme will need to first update his rendering engine, figure out what changed and how to fix it, make the appropriate changes, publish those changes and then hope everyone who uses that theme finds and downloads those changes only after updating their rendering engine.

Interestingly, at first I thought maybe FF’s design would make it the easiest browser in which to implement such an update strategy; now I’m not so sure. Either way, it would certainly be nice to see browser makers work toward such an end. They would all render the same page the same way and could still compete in feature sets and interfaces as they do today. Actually, as the rendering engine’s capabilities are never advertised to the average user, nothing would really seem to change. But, oh how much better it would be!

Hey Mike,

When you calculate the length of development for Apple’s Safari, why not include the amount of time spent on KHTML before Apple picked it up? I mean, if they had to start from scratch, like the Konqueror folks did, it would have been a lot longer than 18 months, right?

Just a thought…

I agree with your writings mike. Two additions though:

– Update speed of the renderer will increase once Moz/FF has spread enough, but only on that platform. I don’t know if Mozilla will turn it on by default in the final version of 1.0, but Firefox already has quite a good update mechanism. This is clearly the way to go.

IE has Windows Update of course, but as there haven’t been any rendering changes in that camp for a few years, it’s been fairly useless up till now. That doesn’t mean, however, that it won’t be useful in the future.

– As for code backwards compatibility: I can really advise IE7 to get IE rendering on the same level as modern browsers.

It not only makes coding for multiple browsers easier and faster, it also makes your code cleaner and more transparent as all the hacks/workarounds are applied afterwards. The latter also makes sure that it’s easy to remove or adjust when IE is finally updated.

Patrick and Isofarro:

There is no contradiction and there is no corner to back oneself out of. I said that if you’d like to serve unstyled content, go ahead. There is nothing wrong with that. There is also, however, nothing wrong with having a stricter policy on who gets in, as long as that policy is not based on a protected class like race, creed, disability, etc. “Netscape 4 users” are not a protected class, nor should they be. Nothing about them implies disability… in fact, I’d be willing to bet that for disabled users, that browser actually hurts things more than it helps. My argument does not preclude serving unstyled content to users… do it if you wish. But in the end, it is your site and you may do otherwise if you choose. My only point in this regard is that as long as you aren’t violating any laws, your user-agent policy is your choice.

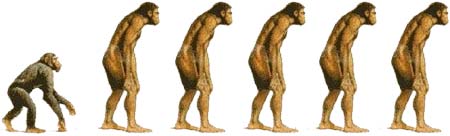

You also mentioned the Ramada/Priceline case. This case had absolutely nothing to do with the ability of these sites to be viewed in Netscape 4 or other such relics of browser evolution. It had everything to do with the sites not being accessible to screenreaders and other assistive technologies. These are two completely separate things, and trying to lump them into the same boat not only misses the point of the lawsuit but muddies the issue at hand as well. This is about fair treatment for the disabled… not fair treatment for users of obsolete technology. The latter is not an issue for the courts.

SO WELL SPOKEN!

sorry mike, but it is you who miss the point. why have a user agent policy at all? saying “netscape 4 users won’t get in” is not that far from saying “any users who don’t have a visual browser and access to a mouse won’t get in”. and the ramada/priceline example was mentioned because of your sweeping “unless it’s a government site” statement. but yes, whatever…

Patrick: You asked “Why have a user agent policy at all?” Simple. Education. When we started redirecting ESPN Netscape 4 users to a browser upgrade page about a year and a half ago, we were responsible for a huge batch of people upgrading their browsers. The fact is that with some users, the only way to get them to upgrade is to create an undeniable need. We felt honored to create this need and many users have thanked us for helping them see the light. If you don’t believe in such a policy for your own site, great… don’t have one. The attitude that somehow everyone’s policy must be the same as yours is one I don’t understand.

As for the Ramada/Priceline example “only being mentioned because of the sweeping claim”, how about reading that paragraph again please? There are two cases I mentioned, not one: government sites and in matters of accessibility. The latter addresses things like the Ramada lawsuit so let’s not pretend this article is about ditching accessibility.

“When we started redirecting ESPN Netscape 4 users to a browser upgrade page about a year and a half ago, we were responsible for a huge batch of people upgrading their browsers. The fact is that with some users, the only way to get them to upgrade is to create an undeniable need.”

ESPN still serves a lite page for old browsers though. That’s not a complete lock-out. I find a complete “you don’t deserve to view my pages” statement to be going a little too far, but ESPN’s version does nothing of the sort; it serves as more of a wake-up call for those who want/need it without being too restrictive.

And that page doesn’t let NN4 users get to the content does it?

Don’t get me wrong – I was a hardcore NN4 user right up to the point where Mozilla became viable (for me). Now we have lots of browsers viable to replace NN4, and those people should replace it. I mean, it’s been about 5 (or more) years since anything has been changed in the NN4-series renderer!

I don’t think you get Mike’s point though:

Disabled people don’t have a choice. They can’t use a normal browser.

NN4 users have a plethora of choice! They can use any normal browser, whatever platform they may be running.

So, though you can’t expect disabled users to change their browser, you CAN, and even must, expect it of NN4 users.

Unfortunately, there is a major difference between education and exclusion. There are better ways of educating. Besides, I thought the whole point was browser evolution, but browser exclusion?

akaxaka wrote:

so what do they use? a special disabled browser? well, no. let’s see:

now, particularly for this last group, screenreader software is expensive. very expensive. some users have to make do with older versions, as they just can’t afford to upgrade all the time. and some of these older versions may only work with older browsers.

but yes, my main point here: users with disabilities use the same kind of browsers “normal” people use.

oh, and just for info:

the answer is: yes, of course it does. notice the “Or if you’d like to view ESPN’s lite site for older browsers and WebTV, click here.” link when you access the main ESPN site via NN4.x. as vinnie mentions above, this is ok-ish…but outright blocking (which, even if mike didn’t necessarily advocate as the only solution, some readers like yourself here have obviously picked up) isn’t.

Dotjay: An essential cog of evolution is extinction. A species which doesn’t adapt to the world around it eventually finds itself extinct. Browsers are no different in this regard. Let nature run its course.

akaxaka:

patrick pretty much summed up what I was going to say. However, here’s another twist: sometimes users don’t have a choice. Believe it or not, there are still organizations standardized on NN4 out there. If I recall correctly, NASA even had a majority of NN4 users as of maybe a year ago. It’s not always a choice that people can make. Why outright exclude them?

Also, the URL for “ESPN lite” for version 4 browsers:

http://lite.espn.go.com/

to stretch the analogy to breaking point, though: extinction happens naturally – just as, one at a time, users with older browsers may or may not upgrade because they may or may not “enjoy” content without presentational bells and whistles. explicitly blocking user agents is more akin to an intervention of a higher entity (god or whatever) wilfully (and by design) making living conditions impossible for a particular older species. let nature run its course = don’t block user agents.

Patrick: Everything happens naturally. It doesn’t matter if it’s caused by humans, animals, God, or whatever. If it happens in the world, it’s natural. As conditions change, species must adapt… that is the only constant. If they do not adapt, they cannot survive.

Any redirection I ever employ on any site is client-side (javascript), so if someone really wants to hang on to their copy of Netscape 4, they need only disable javascript and they’ll be fine.

Mike-

Finally got around to reading this. Great read. All of your “next steps” are great, but my (somewhat pessimistic) view is that it will be a very, very long time before we can even count on 90% of the population using browsers that have the capabilities Safari and FireFox have today. The only way I really see this cold, hard fact changing is if your first suggestion happens: that core members of each of the four parties at hand here get themselves together in a room and beat each other up until we come up with some kind of standards. Yes, standards. Like, ones that we can actually rely on because all the major players have agreed to support them.

I also think your separation of the rendering engine and the browser is key. I’ve often wondered what the impact would be if Apple offered a version of Safari for Windows, or even just the WebKit. I think that the browser will become less important as the rendering engine itself is implemented into apps and even operating systems (see iTunes, NetNewsWire, etc.). If WebKit and Gecko are the best and simplest rendering engines to integrate into your apps, developers may find that their adoption will contribute to the irrelevance of IE.

But who knows.

While this is true, I think it’s largely irrelevant on the timescale browsers turn around in.

I’m sorry, but accessibility, for me, is about making things available to the widest possible audience, accommodating a spectrum of ability. Your NN4 users may be a minority, but it’s likely that so are your users who are colour-blind. You try not to exclude people who have such visual impairments though, so why exclude browsers with a “disability”?

To me, completely excluding a browser for the sake of progress/evolution, is like excluding someone who is red-green colour-blind in the hope that they will become extinct, or that research will find a cure faster.

Maybe I am also taking analogies to the extreme, but it’s about the best way I can explain how I see it.

You said it yourself, you are taking analogies to the extreme dotjay.

And then you sum up some impairments that don’t limit their choice of browser.

The only real issue are screenreaders: They (as far as I know) all use IE, because IE is omnipresent. So that pulls NN4 out of the equation. Although, if you can bring up examples of screenreaders that are still in widespread use.

Vinnie:

I know there are companies that standardise on NN4, but in that case the IT department has more than enough choice and if enough people complain they’ll update (even if it’s to IE, it’s still better).

Of course, when developing Intranet sites for them, you first complain about NN4 and if nothing can be done, you just design for NN4 (and enjoy other luxuries, like multicol).

PS. When I talk about not developing for certain browsers, I’m talking about ones where it’s reasonable to have people upgrade from (like NN4, Opera6>, etc.).

Also, there is quite a big difference between giving people the unstyled look immediately and giving them a “Your browser is too old” page and then letting them click through a warning before entering. In the first instance, people expect the best and get rough, uncut stuff, whereas in the latter instance they’re grateful for everything they get.

Note: When sniffing for Ye Olde Browsers, always sniff by ability, not brand. We all (should) know what happened when everybody started blocking Netscape (4): Netscape (6/7) wasn’t allowed in either.
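A rough illustration of the difference, written in Python purely as a model (real ability sniffing happens client-side in script; the `Client` class, the feature names, and the `REQUIRED` set below are all hypothetical):

```python
from dataclasses import dataclass, field


@dataclass
class Client:
    """Hypothetical description of a visiting browser."""
    brand: str
    features: set = field(default_factory=set)


# Hypothetical set of capabilities the site actually depends on.
REQUIRED = {"getElementById"}

# Illustrative clients: same brand name, very different abilities.
NN4 = Client("Netscape", {"document.layers"})
NS7 = Client("Netscape", {"getElementById"})


def allow_by_brand(client):
    """Brand sniffing: turns away everything called Netscape, old or new."""
    return client.brand != "Netscape"


def allow_by_ability(client):
    """Ability sniffing: admits any client that supports what the site needs."""
    return REQUIRED <= client.features
```

With these made-up clients, the brand check locks out the newer Netscape along with the old one, while the ability check only turns away the client that genuinely cannot do the job.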

hmm, I like the AOL comparison. AOL updates itself quite often; they do little code tweaks, but they save the UI changes for major versions.

Perhaps FF could adopt a similar idea. I’m quite surprised there isn’t a “silent update” for the Gecko part of things to keep everyone ticking on the same code.

Major updates could then be offered to the user, with screenshots may I add so they could visually see how the UI would change.

If AOL were to force its users to swap the IE engine for Gecko, we would see a dramatic increase in standards-compliant browsers. The UI doesn’t have to change; the users would be “none the wiser” other than the fact that sites might actually render correctly.

If the Trillions of AOL users were to start using the de facto web-standards it might actually urge people to “get their arse into gear” in regards to the spec.

Bit of British slang for ya, but I hope you get the idea.

I think most of the people that have commented on here that think this is a great idea miss some of the realities of actually pulling this off.

First, there is the assumption that if a small group of individuals decides what standards to implement and then goes back and implements ONLY and ALL of those standards, things will magically work perfectly. Never mind that these smaller groups may misinterpret the standards and implement them differently. Never mind that bugs would still get introduced across each implementation, requiring still another slew of hacks to have everything work cross-browser. What you need is the same rendering engine for every browser, for every platform. That’s what Flash is. That’s what Flash does.

Then there’s the issue of backwards compatibility in regards to the rendering engine (backwards compatibility of the interface is less of an issue). One of the reasons why Microsoft hasn’t moved dramatically forward with rendering engine changes has to do with how it gets used. It’s not just the browser. It powers a lot of the elements of the OS. Developers build applications on top of it. Microsoft is dedicated to its current user base and does extensive testing on multiple versions of its OS and in the various applications that run on top of it to make sure that updates make a minimal impact to the end user. This is why it takes so long for updates to come out. I can tell you right now that I’d be mighty pissed if something I developed stopped working 6 months from now.

So, what does Firefox have going for it? Certainly the fact that it’s cross-platform. From a developer’s point of view, it’s nice to know that we could possibly develop an application and have it work as expected on any OS. That’s what we expect of Flash. Mozilla needs to push the rendering engine as a platform for application development.

Anyways, I’ve rambled on enough… As mentioned, we should install it on any machine we see. Once 1.0 comes out, I know I will be. :)

I completely agree with you about the browser upgrades: they should be seamless and just happen without requiring too much effort from the user. Firefox extensions that break with version upgrades are just one of the things I find complicated about upgrading; that needs to be sorted out, and extensions should just work.

I also agree with most of what you said about backwards compatibility, and dropping support for older browsers. In fact, I’ve already dropped support for IE on my site, but accessibility must be maintained for all browsers… even NN4!

However, I do not agree with your rapid development approach to creating new standards. Though, I agree that standards and recommendations do need to be implemented faster in browsers, much time and care needs to be taken when actually writing the specifications. Just consider the fact that during the browser wars it’s likely that both the IE and NN development teams comprised probably around 10 or so developers each (just as you propose for this R.B.I.D.F. idea), that were brainstorming ideas, thinking about what consumers and developers wanted and implementing them as fast as they could. Now just look at the <blink>ing <marquee> mess that got us into! The standardisation process may be slow, but it is slow for a reason — so that big mistakes can be mostly avoided, and only the best ideas are kept.

Personally, I have a bad feeling about the WHATWG. There are so many extensions being added, so quickly; it’s difficult to see how much of it will be a welcomed improvement, and how much will not. My small glimmer of hope for the WHATWG comes from the fact that it’s being run by Hixie, a man whom I have great deal of respect and admiration for, and that there are plans to take their work to a standards organisation, such as the W3C.

Speaking of Mike’s points regarding the ease of upgrading Flash versus the ease of upgrading your browser:

I think this is why users of Linux and Macs have and will continue to have a superior web experience to that of the average Windows user. Most Linux distros can automatically update components and packages, so that the user, with little or no intervention, can always be using the latest versions of Firefox or Konqueror. The speed at which these two browsers are updated and improved is impressive.

Likewise, Mac users can have Safari automatically updated along with other system components.

If Mozilla/Firefox or Opera for Windows could only build in an auto-updating feature, that would be a remarkable step for web browser technology.

Oh, and on the topic of the best browser, I say it’s a toss-up between Safari and Omniweb. Omniweb has the same renderer and incredible features, but it’s a little slower. Really, though, all the modern browsers except IE and Opera for Linux are great. Each has many advantages and one or two trade-offs.

I’m surprised by all this “GET FIREFOX!!!” stuff. Why? Opera is vastly superior to FF in virtually every aspect (especially the UI and general user-friendliness), save for the two or three FF extensions that are pretty neat. On top of that, Opera’s rendering engine is far, far more advanced than that of FF. Opera runs faster, is a smaller download, more configurable, has some other applications built-in, is way better for older people who need to enlarge web pages… No, it’s not free, but I have yet to see a person who can’t live with a tiny banner if they’re not willing to pay for their copy.

Re: updating browser/updating Flash.

I just upgraded to Mozilla Firefox 1.0 RC2 (I was on RC 1 before). I think it took about 7 mouseclicks to get it installed.

While I was reading, I remembered that I had to reinstall Flash in it. I started the Flash installer (it’s on my hard disk), clicked about three times, and you know what it did? It killed my browser, with maybe 10 tabs in it, installed Flash and then happily started it again (without the tabs, had to backtrack in the history sidetab).

Tell me again how the Flash user experience is better?

I thought this was already the case. Firstly, modern browsers render pages differently depending on the ‘doctype’ used, giving ‘Standards’ or ‘Quirks’ Mode. Also if you render a page sent with “application/xhtml+xml” mime-type and it contains an error, the whole page will stop (a requirement of XML) in Opera 7. Whereas the “text/html” mime type allows missing end tags and other faults to be parsed.

Regarding the main article here, it was well written. But the main problem is always IE6. Even if a group of browser makers got together (as you suggest) do you really think Microsoft would be willing to upgrade their browser as fast as Mozilla would? They are only interested in selling Longhorn.

The only possible solution is a browser based on Flash. I keep waiting for this miracle to happen. (Use any font! Today! Scalable layouts to fit all screens!) Macromedia have already taken Flash way beyond a mere vector display routine – just look at how it can interact with XML and MP3 files now.

One last thing to mention: if you’ve ever trawled through the thousands of bugs listed on Bugzilla (Mozilla’s bug database) you’ll realise that it is a major effort to code a browser. There are so many combinations of layout to cater for. Different plugins add to this. Then think about JavaScript and dynamic content using the DOM method. To me it’s clear why implementing new code takes so long. I too wish for CSS3, but we haven’t even got 100% CSS2.1 compliance yet, have we? Because each new part needs to be tested and tested until it is bug free.

When the whole debate arose about whether web browsing should be part of the OS or not, I thought that today’s web browser should be broken up into the following OS services:

(Not sure if security would be better separated out as separate service; I currently lumped in the HTTP-related aspects with the HTTP client service.)

Ideally, at least the first two services would be replaceable by any vendor’s implementation (and even more ideally, a power user would be able to to switch back and forth between different implementations, perhaps on both a vendor and version basis). A Web browser would make use of all three services, providing a user interface for browsing. A web services client could use just the HTTP client service. Everyday application could implement its interface using HTML and just use the rendering service.

Unfortunately, the various judicial rulings never got beyond the level of “should the browser as a whole be in the OS or not”.

Yo,

Apple’s KHTML improvements are useless to Kdevs, see dot.kde.org for info…..ergo Apple has contributed nothing to khtml.

I’d just like to point out that FireFox already has auto-update functionality built in. Under Tools > Options > Advanced there is an option to periodically check for software updates. I don’t know quite how periodic this is, but I know it works – I just used it to upgrade to 1.0RC2. I’d like to see it moved so it’s right there in the tools or help menu personally, but that’s by the by.

If only they’d put it into the rest of their software…

Hmm? What’s wrong with Opera for Linux, BTW? I always use it on whatever Linux system I’m on, because it performs much better than Mozilla on Linux (due to gtk/xul I think)…

Chris Hester:

There are actually people currently working on this. Enter DENG. Still not ready for prime-time but pretty impressive IMO.

On the topic of separating a browser’s rendering engine from the GUI (which is absolute brilliance Mike), it would seem that Apple is already doing this with Safari (or at least really close to doing it). If you check out the Safari user agent string, AppleWebKit and Safari have separate version numbers.
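For anyone who wants to see the two version numbers for themselves, here is a rough Python sketch that pulls them out of a user agent string. The sample string is an illustrative Safari-style one I am assuming for the example, not something quoted from the post, and `component_versions` is a made-up helper name.

```python
import re

# Illustrative Safari-style user agent string (an assumed sample for this sketch).
SAMPLE_UA = (
    "Mozilla/5.0 (Macintosh; U; PPC Mac OS X; en) "
    "AppleWebKit/125.5.5 (KHTML, like Gecko) Safari/125.12"
)


def component_versions(user_agent: str) -> dict[str, str]:
    """Return the version token that follows AppleWebKit/ and Safari/, when present."""
    versions = {}
    for name in ("AppleWebKit", "Safari"):
        match = re.search(rf"\b{name}/([\d.]+)", user_agent)
        if match:
            versions[name] = match.group(1)
    return versions


print(component_versions(SAMPLE_UA))
```

The engine and the browser shell report their versions independently, which is what makes it plausible to ship one without the other.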

It’s just a shame they’re pre-empting MS’ mistake of tying the render engine version to the OS version…

Isn’t the only way to get a new version of Safari (i.e. 1.2 to 1.3) to upgrade OSX as well though?

That’s both misleading and unfair.

Apple makes the source available with WebCore which fulfills the requirement of the license. If you actually read the lists you’d see comments like this one by Leo Savernik on kfm-devel on Sept 22, 2004:

The problem is in part that Apple provides too many changes and KDE has too few resources to deal with them. There is no question that Apple could do more (fund the Konqueror project, donate money, provide patches), but they do follow the license and that is really the most important thing.

Great idea Mike, however why not take it even further by having one universal rendering engine?

This should be a development of the W3C’s Amaya project into a WebKit-style piece of open source software which any browser maker could integrate into their browser.

Imagine a world where websites rendered *exactly* the same on every OS and browser because the rendering engine was the same!

For browser makers it would mean zero time spent on the rendering engine and more time & budget for developing the features of the browser. For the W3C it would make developing standards instantaneous. For users it would mean a better web experience without sacrificing the features of their favourite browsers. And of course for designers and developers it would be Nirvana!

I like the Cascadia button.

Might have to get myself one of those CAS bumper stickers.

One point no one seems to mention is that accessibility can also refer to the developing world, where many of our cast-off computers seem to land. Where connect speeds are most likely sloooow. Where there’s a thirst for knowledge, but not necessarily the possibility of using a modern browser. And where the number of potential viewers is huge.

I was discussing a recent accessibility showcase in Washington DC with a client in Geneva, who totally misunderstood what I meant when I said ‘accessibility’, because their website aims to be accessible to clients all over the developing world. While internet access is pretty good in Latin America and many areas of Asia, that’s not the case in Africa, with certain exceptions.

And I’ve been discussing what level of browser to shoot for with a local client (a musical group), but without realizing that Firefox isn’t being developed for Mac OS 9, which they use. Now I realize that in redesigning their new site, I’ll have to take that into account… unless everyone thinks I should fire the client since their computer isn’t up-to-date!

I’m not saying I don’t wish for 100% compliance to web standards tomorrow (better yet today), but I just want to remind everyone that millions and maybe billions of people may be shut out in addition to the disabled population if we act rashly.

By the way, Olly, in Mac Firefox 1.0 preview release, the automatic update choice is in preferences, not tools.

Interesting, but I think you have a flawed premise. You define “best” as an absolute, and use Safari as an example (“the best browser in the world”). But “best” is relative; what it means depends on the context in which it’s used. For Microsoft, or for AOL, “best” means “fits our commercial goals”, which include trying to ensure that users of their browsers remain users of their browsers. The web is already full of IE-only pages; what possible incentive is there for Microsoft to participate in your Camp David-style interaction? Note here that I’m *not* in any way accusing them of venality, or anything worse than established capitalist self-interest, which is what they exist *for*. It’s the duty they owe their shareholders, and operating against that self-interest might even open them up to shareholder lawsuits.

Wild extrapolations aside, “best” also has a meaning for the huge majority of end-users, those people who are not interested at all in the technical issues behind the web and who want it to “just work”. For them, “best” means “what works for me in the widest set of cases with the minimal effort and decision-making on my part”. They don’t care; choosing a browser is nothing like choosing a car, or a house or any other item for which the act of choosing is a big part of the fun. They just want to get to their websites and not be faced with oddly rendered or unsupported pages. For them, “best” means “what comes with my system” until it breaks. But it won’t break, because the web favours it amongst all browsers. It’s a positive feedback thing.

Maybe we can wish that it ain’t so. But it is.

First of all, thanks to everyone for the detailed and thoughtful comments on this post so far. Apologies for not participating more heavily… just been a bit busy. I would like to make a few clarifications for the record:

1. With regards to Safari being “arguably the best browser on any platform” — there is a reason I put the word “arguably” in there. It’s personal preference and I’m not saying one way or the other if I personally think it’s best. And besides, that’s really not the point of this post. It doesn’t matter whether Safari, Firefox, or Opera is the best… it just matters that together, they are clearly the best. The focus should be on getting everyone we know to use one of the three.

2. The point about sending people with outdated browsers to an upgrade page is also not central to the article. My stance on this is soft, and I think however you want to do it is up to you. I personally think the best policy is to redirect to an upgrade page but then include a “Let me in anyway” link which cookies the user. Now… that said, I don’t think one should necessarily pay too much attention to the visual experience for people with obsolete browsers. My main point here is that if your demographics call for it, use modern code, and those who use obsolete user agents can either upgrade or deal with a little ugliness.

3. Glad everyone seems to like the theory of separating out the rendering engine. As someone mentioned, Apple is starting to do this a bit already, but unfortunately they seem to be tying this to OS upgrades. Bad.
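For what it’s worth, the upgrade-page policy from point 2 could look something like the Python sketch below. Treat all of it as assumptions: the cookie name `let_me_in`, the `/upgrade` path, and especially the `is_obsolete` check (a crude Netscape 4.x-style heuristic) are placeholders I made up, and a real site would plug in its own rules.

```python
from http.cookies import SimpleCookie

BYPASS_COOKIE = "let_me_in"  # hypothetical cookie name
UPGRADE_PATH = "/upgrade"    # hypothetical location of the upgrade page


def is_obsolete(user_agent: str) -> bool:
    """Placeholder heuristic (assumption): flag Netscape 4.x-style user agents."""
    return user_agent.startswith("Mozilla/4.") and "compatible" not in user_agent


def route(user_agent: str, cookie_header: str, requested_path: str) -> str:
    """Pick the path to serve: the upgrade page for obsolete browsers without the bypass cookie."""
    cookies = SimpleCookie()
    cookies.load(cookie_header)
    opted_in = BYPASS_COOKIE in cookies and cookies[BYPASS_COOKIE].value == "1"
    if is_obsolete(user_agent) and not opted_in:
        return UPGRADE_PATH
    return requested_path


def bypass_cookie_header() -> str:
    """Build the Set-Cookie value to send when the visitor clicks "Let me in anyway"."""
    cookie = SimpleCookie()
    cookie[BYPASS_COOKIE] = "1"
    cookie[BYPASS_COOKIE]["path"] = "/"
    return cookie[BYPASS_COOKIE].OutputString()


old_browser = "Mozilla/4.79 [en] (Windows NT 5.0; U)"
print(route(old_browser, "", "/index.html"))             # first visit
print(route(old_browser, "let_me_in=1", "/index.html"))  # after clicking the link
```

Once the cookie is set, the visitor is never redirected again, so the nag happens once and the choice stays with the user.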

Actually, seeds of Safari 1.3 for Panther incorporate rendering improvements from Safari 2.0 for Tiger.

Safari 1.3 and Safari 2.0 have the same WebKit. The only difference is new Tiger UI features are only present in 2.0. Reading between the lines, it seems pretty clear that Apple is going to release the new WebKit on both Panther and Tiger and not tie it to a single OS version.

I totally agree with the need for “grass roots” education of the users. I too have asked all my friends and relatives why they still use IE and most reply “what’s IE?”

So, where can I find a resource of short, to the point, simple to understand articles/fact sheets that I can pass on to these people?

“every time a new version of the Flash plug-in is released, we get a predictable 80-90% penetration rate at the 15 month mark”

Where did you get this information from?

These numbers are overly optimistic. Do not trust the marketing-talk on Macromedia’s site.

heinzkunz: I can verify that the numbers Macromedia provides are accurate. I’ve been personally tracking Flash penetration at ESPN.com for 4 years now and my numbers are almost identical to Macromedia’s. By the way, there is no reason to be suspicious of their survey results. The surveys are conducted by NPD (the most reputable such firm in the world) and the methodology is such that actual users are measured, as opposed to what Apple and Microsoft do which is just to measure “total downloads”.

80-90% of Flash at the 15 month mark is entirely accurate.

I know this debate started back in November 2004, but to add a comment to this, I still actually use IE. I have been using Firefox, and to be honest, I think it is worse than any of them! Every time a Firefox browser loads, my PC runs extremely slowly until I close it again. As I sometimes run two browsers side by side (for which the tabbed method wouldn’t be very helpful), I am forced to return to IE. Opening 2 or more browser windows almost makes my PC come to a standstill!

I have tried Netscape, but find it has a totally horrible interface that I do not find very friendly. Once again I am back to IE as I do not see much point even trying Opera. I know that IE has many security risks, however I find that with SP2, you get the pop-up blocker, I have software to combat spyware and viruses, etc. But still IE, for me is the best option and the better browser… Microsoft just need to get some decent programmers who can actually make software and find their own bugs and risks like most other programmers do!

Wow, so your article was written well over a half year ago, but sadly the problem hasn’t been solved yet.

The answer I get when asking why folks keep using IE is: »Duh, it works just fine.« And I think that’s another problem that web developers themselves create: there are a lot of sites out there using JavaScripts, table layouts and lots of ugly stuff just so IE renders their site correctly (to do hover:anything, for instance). Why? Nobody does that for NN4 anymore (or do they?), but because IE still has a high market share everyone »optimizes« for it and the user doesn’t even notice that he or she is using an obsolete browser. And IE keeps its market share. It’s a vicious circle, really.

An interesting announcement by an IE7 developer sheds some light on the next big browser we’ll need to support. Most of what I read here is good news but I am a bit disappointed with the fact that IE7 will be wound so tightly into only the new Vista OS. It would have been better if Win2k and XP (early service packs) users were able to download the browser when it’s released. Now it will take a few extra years at least to weed out IE6, since many people like myself have been happy with Win2k as a development platform. I have no idea if IE7 will be ported to the Mac, but I doubt it.

I have a feeling Longhorn/Vista and Microsoft in general are making a move towards making it easier and more automatic for computer novices to upgrade software. I HOPE so at any rate! Guess we’ll have to wait and see.

Dan J, if you ever check this page again, I have used Firefox a lot lately and I know what you mean about it being slow. But mostly this is just the overhead from the software loading. Once FF loads you can browse around with no problems and some pages seem to load much faster than in IE for me. I notice IE lags when there is a large amount of content with many positioned boxes. Don’t forget IE loads extra fast because of the way MS has it integrated into the OS. Some of the resources it needs are loaded at startup. The trade-off for such seemingly fast-loading MS software is a nice long wait for the system to boot up!

P.S. I have loved Flash since version 2 but it was the improvements made for Flash 3 that really made it a super smash success rather than just a one-hit wonder. After that I realized that you could rely on Macromedia to take it in the right direction. You can’t possibly argue with the numbers in regards to upgrades. They managed to make the upgrades quick and automatic, define a new standard and, for the most part, never even require the browser to be reloaded or the system to be rebooted. A recipe for success. Even on Netscape upgrading was fairly painless.

P.P.S. The separate rendering engine view is a must. I have always complained about IE that, with ALL of the security updates that have gone out, they never included patches for known bugs. Wadda Sin!

For a start, I’d have you on the Delta Force committee. You talk a lot of sense. I’m new to this design thing and I’m still learning all the little tricks to make the stuff I do IE-friendly. It annoys me that I can design something that’ll validate for CSS and XHTML first time, and yet not work in IE. Why should I have to write garbage to get my sites to work in the browser that 80-odd to 90% of the world uses?

My worry is that Microsoft will never sit down on your “Delta Force Committee” and therefore render it a bit meaningless. Like it or not, Microsoft do currently rule the roost in terms of user base.

The fact that the Box Model Hack and the Underscore hack and the plethora of other little hacks exist at all says to me that the biggest browser company in the world couldn’t care less about the new web standards. If they don’t care, then the rest of us are screwed for a while to come.

Hopefully, enough people will start to use standards-compliant browsers and Microsoft will stand up and take notice…

I’m not holding my breath for this to happen though.

As for my recommendations for the committee, I’d like to name the usual suspects, Zeldman, Meyer, Bowman for the expertise, a representative from each of the 4 main browser houses, yourself, because it’s your baby and it makes sense, Tim Berners-Lee, just because… and someone like me, a newcomer to it all who’d just like a standard that meant something. Something they could learn to do the job from, and could stop the rest of them talking in words only a guy with a degree can understand.

End of rant, sorry to take up everyone’s valuable time.

The part where you spoke of backwards compatibility in code is how I feel to a T.